How 148 users farmed 750 days of free premium from our referral system — and how we shut it down

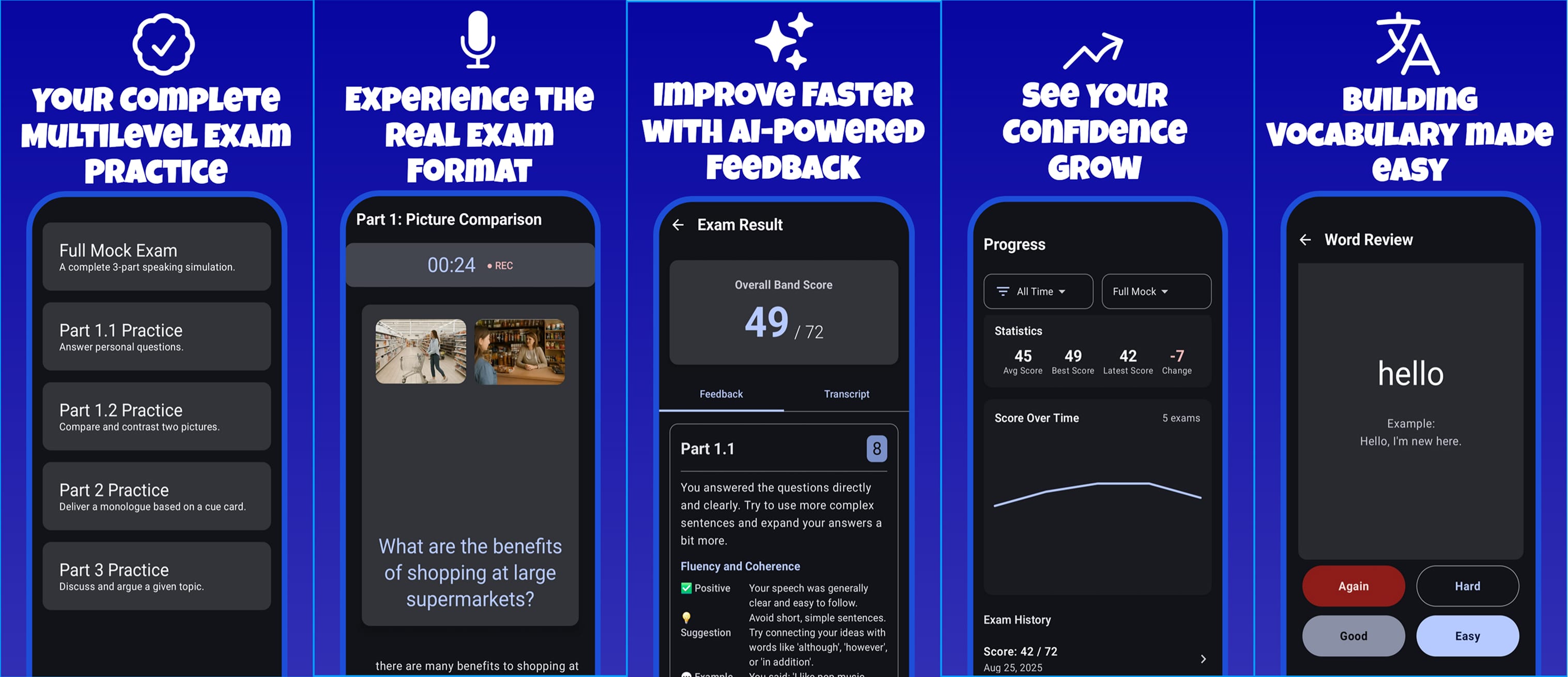

We run Spiko, an AI-powered English exam prep app with ~77K users, mostly students in Uzbekistan. In early 2026, we launched a referral system to grow organically. It worked — 397 referrals, 2.4x better retention than organic users, 91% of them genuine.

It also leaked roughly 700 of the ~750 premium days it handed out to 148 abusers.

This is the full story: what they exploited, what we missed, and the layered defense we shipped.

The referral system

Simple mechanic: share your code, friend signs up with it, both get rewarded. The reward tiers were:

| Tier | Reward | Farming incentive |
|---|---|---|
| 1 | 3 streak freezes | None (not worth faking) |
| 2 | 7 days Silver premium | Jackpot |
| 3 | 7-day module unlock | More jackpot |
| 4 | Referral champion badge | Cosmetic |

Later we simplified to 1 referral = 1 day of full premium for both sides. No cap.

Detection (and what we got wrong)

We initially flagged 114 users with a referral_banned column on the users table. But that flag only blocked them from using referral codes — they could still sign in and use the app with all the premium time they'd already earned.

Worse: a banned user could delete their account and re-register with a different Gmail, getting a clean referral_banned = 0.

Their alt accounts — the fake Gmails they'd created to farm referrals — were completely untouched.

We'd banned the action, not the actor.

The fix: Layered defense

We shipped everything in one session — auth bans, deletion persistence, alt detection, premium revocation, and legal updates.

Layer 1: Auth-level ban

Added a single check at every authentication entry point:

    export class AccountSuspendedError extends Error {
      constructor() {
        super("Account suspended for violating Terms of Service.");
        this.name = "AccountSuspendedError";
      }
    }

    // In AuthService
    private assertNotBanned(user: User): void {
      if (user.referral_banned === 1) {
        throw new AccountSuspendedError();
      }
    }

Placed before buildAuthResponse() in:

- googleSignIn(): blocks Google sign-in
- telegramLogin(): blocks Telegram bot sign-in
- telegramWidgetLogin(): blocks Telegram widget (web)
- refresh(): kicks already-logged-in users within 1 hour (token expiry)
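To make the placement concrete, here is a minimal, self-contained sketch of one entry point calling the guard before any tokens are issued. The `User` shape, the `signIn` wrapper, and the token format are assumptions for illustration; the real AuthService has more fields and separate methods per provider.

```typescript
// Hypothetical minimal user shape; the real table has many more columns.
interface User {
  id: string;
  referral_banned: number; // 0 = ok, 1 = banned
}

class AccountSuspendedError extends Error {
  constructor() {
    super("Account suspended for violating Terms of Service.");
    this.name = "AccountSuspendedError";
  }
}

function assertNotBanned(user: User): void {
  if (user.referral_banned === 1) {
    throw new AccountSuspendedError();
  }
}

// Stand-in for buildAuthResponse(); the token format here is made up.
function buildAuthResponse(user: User): { userId: string; accessToken: string } {
  return { userId: user.id, accessToken: `token-for-${user.id}` };
}

// Every entry point runs the same guard before issuing tokens.
function signIn(user: User): { userId: string; accessToken: string } {
  assertNotBanned(user);
  return buildAuthResponse(user);
}
```

Because the guard throws before `buildAuthResponse()` runs, a banned account never receives a fresh token from any of the four paths.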

The route handler returns 403 (not 401) with errorCode: "ACCOUNT_SUSPENDED":

    function authErrorResponse(c: AppContext, e: unknown): any {
      if (e instanceof AccountSuspendedError) {
        return c.json(
          { message: e.message, errorCode: "ACCOUNT_SUSPENDED" },
          403
        );
      }
      return c.json({ message: getErrorMessage(e) }, 401);
    }

Why 403 matters: our Android AuthInterceptor automatically retries on 401 (token refresh).

A 403 bypasses the retry loop and triggers immediate logout:

    // AuthInterceptor.kt
    if (response.code == 403 && !isPublicRoute) {
        Log.w("AuthInterceptor", "Account suspended (403). Logging out.")
        runBlocking { sessionManager.logout() }
        return response
    }

Using 401 would have caused the app to silently retry in a loop, thinking it was an expired token. 403 tells the client: this isn't an expired-token problem, you're not welcome here.
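The difference can be sketched as a small decision function. This is an illustration in TypeScript rather than the actual Kotlin interceptor, and `nextAction` and `attemptsUntilStop` are hypothetical names.

```typescript
type ClientAction = "proceed" | "refresh-and-retry" | "logout";

// Maps a response status to what the client does next.
function nextAction(status: number, isPublicRoute: boolean): ClientAction {
  if (isPublicRoute) return "proceed";
  if (status === 401) return "refresh-and-retry"; // expired token: fetch a new one
  if (status === 403) return "logout"; // suspended: retrying cannot help
  return "proceed";
}

// Counts how many requests a client makes before it stops, given the status
// the server keeps returning. maxAttempts caps the simulation.
function attemptsUntilStop(serverStatus: number, maxAttempts: number): number {
  let attempts = 0;
  while (attempts < maxAttempts) {
    attempts += 1;
    if (nextAction(serverStatus, false) !== "refresh-and-retry") break;
  }
  return attempts;
}
```

With a server that always answers 403, the simulated client stops after one request. With one that always answers 401, it keeps going until the cap, which is the loop a banned user would have been stuck in.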

Layer 2: Ban persistence across account deletion

When a user deletes their account, we snapshot their identity into a deleted_accounts table:

    CREATE TABLE deleted_accounts (
      id TEXT PRIMARY KEY,
      googleId TEXT,
      email TEXT,
      telegramId TEXT,
      fcmTokens TEXT, -- JSON array of device tokens
      referral_banned INTEGER NOT NULL DEFAULT 0,
      deletedAt TEXT NOT NULL DEFAULT (datetime('now'))
    );

When anyone signs up, we check if their Google ID, email, Telegram ID, or FCM token matches a deleted account. If it does, we restore the ban using MAX():

    UPDATE users SET
      referral_banned = MAX(referral_banned, ?)
    WHERE id = ?;

MAX() is key — it means a ban can only be added, never accidentally removed. If the deleted account had referral_banned = 1 and the new account has referral_banned = 0, MAX(0, 1) = 1 — still banned. Delete and re-register with the same Google account? Still banned.
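The matching step and the one-way MAX() behavior can be sketched in memory as follows. The interfaces and function names are assumptions for illustration; the real check runs against the deleted_accounts table.

```typescript
interface DeletedAccount {
  googleId: string | null;
  email: string | null;
  telegramId: string | null;
  fcmTokens: string[]; // parsed from the JSON array column
  referral_banned: number;
}

interface SignupIdentity {
  googleId?: string;
  email?: string;
  telegramId?: string;
  fcmToken?: string;
}

// True when any identifier supplied at sign-up matches the deleted snapshot.
function matchesDeleted(id: SignupIdentity, d: DeletedAccount): boolean {
  return (
    (id.googleId !== undefined && id.googleId === d.googleId) ||
    (id.email !== undefined && id.email === d.email) ||
    (id.telegramId !== undefined && id.telegramId === d.telegramId) ||
    (id.fcmToken !== undefined && d.fcmTokens.includes(id.fcmToken))
  );
}

// Mirrors MAX(referral_banned, ?): the flag can go up but never down.
function restoredBanFlag(
  current: number,
  id: SignupIdentity,
  deleted: DeletedAccount[]
): number {
  let flag = current;
  for (const d of deleted) {
    if (matchesDeleted(id, d)) flag = Math.max(flag, d.referral_banned);
  }
  return flag;
}
```

A new account with a different email but the same FCM token still matches the snapshot, and a match against an unbanned snapshot leaves an existing ban in place.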

Layer 3: Alt account detection via referral graph

The 114 originally banned users were the fake accounts — the pawns. Their main accounts — the puppet masters collecting premium days — were still untouched. We walked the referral graph:

    SELECT DISTINCT CASE
        WHEN u_referrer.referral_banned = 1 THEN r.referredUserId
        WHEN u_referred.referral_banned = 1 THEN r.referrerId
      END AS alt_id
    FROM referrals r
    JOIN users u_referrer ON r.referrerId = u_referrer.id
    JOIN users u_referred ON r.referredUserId = u_referred.id
    WHERE (u_referrer.referral_banned = 1 AND u_referred.referral_banned = 0)
       OR (u_referred.referral_banned = 1 AND u_referrer.referral_banned = 0);

First pass: 30 alt accounts found.

Second pass: 4 more second-degree alts.

Third pass: 0 remaining. Clean.

The network wasn't deeply layered — most abusers were running simple one-hop schemes. Final count: 148 banned users (114 + 30 + 4).
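The repeated passes amount to running the same one-hop check until it returns nothing. A minimal in-memory sketch, assuming a flat list of referral edges (the function names are hypothetical, and the real passes were SQL against the users and referrals tables):

```typescript
interface Referral {
  referrerId: string;
  referredUserId: string;
}

// One pass: unbanned accounts that share a referral edge with a banned one.
function findAlts(referrals: Referral[], banned: Set<string>): Set<string> {
  const alts = new Set<string>();
  for (const r of referrals) {
    const referrerBanned = banned.has(r.referrerId);
    const referredBanned = banned.has(r.referredUserId);
    if (referrerBanned && !referredBanned) alts.add(r.referredUserId);
    if (referredBanned && !referrerBanned) alts.add(r.referrerId);
  }
  return alts;
}

// Repeat passes until one comes back empty; records how many were found per pass.
function banUntilClean(
  referrals: Referral[],
  seed: string[]
): { banned: Set<string>; perPass: number[] } {
  const banned = new Set<string>(seed);
  const perPass: number[] = [];
  for (;;) {
    const alts = findAlts(referrals, banned);
    perPass.push(alts.size);
    if (alts.size === 0) break;
    alts.forEach((id) => banned.add(id));
  }
  return { banned, perPass };
}
```

In this sketch `perPass` plays the role of the 30, 4, 0 sequence above. A walk like this reaches every account connected to a banned one, so it only fits when a referral link to a banned account is itself treated as evidence of abuse.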

Layer 4: FCM device cross-check

Firebase Cloud Messaging tokens are unique per app install per device. When a user registers their device, we now check if that FCM token is associated with any banned user:

    // In updateFcmToken()
    const bannedDevice = await this.repo.findBannedUserByFcmToken(token, userId);
    if (bannedDevice) {
      console.warn(
        `[Auth] Device shared with banned user: userId=${userId}, bannedUserId=${bannedDevice.id}`
      );
      await this.repo.banUser(userId);
    }

The lookup is a single query:

    SELECT u.id FROM devices d
    JOIN users u ON d.userId = u.id
    WHERE d.fcmToken = ? AND u.referral_banned = 1 AND u.id != ?
    LIMIT 1;

This catches the scenario: banned user creates new Gmail, signs up on same phone, app sends FCM token → token matches banned user's device → new account auto-banned.

The known limitation: FCM tokens reset on app reinstall. A determined user can uninstall, reinstall, and get a fresh token. But we've raised the cost of abuse from "sign in with another Gmail" (30 seconds) to "uninstall, reinstall, create new Gmail, sign up fresh, and lose all progress" (minutes of effort for a few premium days). Most users are students studying for entrance exams — they're not going to keep grinding that loop.

Layer 5: Premium revocation

Four banned users still had active premium modules, all from referral rewards rather than legitimate purchases (subscription_providerId: null). We revoked them in one query:

    UPDATE users SET
      active_modules = '[]',
      module_speaking_expiresAt = NULL,
      module_writing_expiresAt = NULL,
      module_reading_expiresAt = NULL,
      module_listening_expiresAt = NULL,
      subscription_tier = 'free',
      subscription_expiresAt = NULL
    WHERE referral_banned = 1
      AND active_modules IS NOT NULL AND active_modules != '[]';
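The query itself does not filter on subscription_providerId, so it relies on the manual check described above. A pre-check along these lines could confirm that no paying customer is caught before running it. This is a sketch with a hypothetical `UserRow` shape and function names, not the code that was run.

```typescript
interface UserRow {
  id: string;
  referral_banned: number;
  active_modules: string | null; // JSON array stored as text, e.g. '["speaking"]'
  subscription_providerId: string | null; // null = no purchase on record
}

// Same condition as the WHERE clause of the revocation query.
function shouldRevoke(u: UserRow): boolean {
  const hasModules = u.active_modules !== null && u.active_modules !== "[]";
  return u.referral_banned === 1 && hasModules;
}

// Banned users the revocation would hit even though they have a purchase on record.
function paidUsersAtRisk(users: UserRow[]): UserRow[] {
  return users.filter((u) => shouldRevoke(u) && u.subscription_providerId !== null);
}
```

An empty result from `paidUsersAtRisk` corresponds to the situation described above, where every affected module came from referral rewards.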

Layer 6: Legal coverage

Updated Privacy Policy and Terms of Service in all 3 languages (EN/RU/UZ).

Privacy Policy additions:

- Device identifiers used for abuse prevention

- Abuse flags retained after account deletion

Terms additions:

- Explicit prohibition on multi-account creation and referral manipulation

- Account suspension section with specific reasons

- Ban persistence clause: "Suspension status is retained even after account deletion and will be applied to any new account created from the same device or identity."

The data: Was the referral system worth it?

After the cleanup, we ran the numbers. The answer surprised us.

Genuine vs fraudulent

| Type | Count | % |
|---|---|---|
| Genuine referrals | 363 | 91% |
| Fraudulent referrals | 34 | 9% |

91% of referrals were real people referring real people. The system was working.

Retention: Referred vs organic

| Cohort | 7-day active | 30-day active |
|---|---|---|
| All users | 8.8% | 28.5% |
| Referred users | 20.7% | 37.8% |

Referred users were 7-day active at 2.4x the baseline rate (20.7% vs 8.8%); at 30 days the gap narrows to about 1.3x (37.8% vs 28.5%). Someone who joins because a friend told them is more committed than someone who found you on the Play Store.

Where did the premium days go?

| Reward | Total claims | By fraudsters | By legit users | Premium days granted |
|---|---|---|---|---|
| 7-day Silver premium | 74 | 67 (91%) | 7 | 518 |
| 7-day module unlock | 33 | 32 (97%) | 1 | 231 |
| Streak freezes | 261 | 83 (32%) | 178 | 0 |
| Avatar badge | 22 | 20 (91%) | 2 | 0 |

749 premium days were given away. 693 (93%) went to fraudsters. Only 56 days went to legitimate referrers: 8 weeks of premium in total, given away in exchange for hundreds of sticky users. Most paid acquisition channels would kill for that ratio.

The problem wasn't that the system was too generous. It's that the high-tier rewards attracted abusers who farmed at scale, while legitimate users mostly stayed at tier 1 (streak freezes).
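The day counts follow directly from the claim counts in the table, at 7 days per claim. A short sketch of that arithmetic (the `PremiumReward` type and function name are just for illustration):

```typescript
interface PremiumReward {
  reward: string;
  daysPerClaim: number;
  totalClaims: number;
  fraudClaims: number;
}

// Claim counts copied from the table; only the two rewards that grant premium days.
const premiumRewards: PremiumReward[] = [
  { reward: "7-day Silver premium", daysPerClaim: 7, totalClaims: 74, fraudClaims: 67 },
  { reward: "7-day module unlock", daysPerClaim: 7, totalClaims: 33, fraudClaims: 32 },
];

function premiumDaySplit(rows: PremiumReward[]): {
  total: number;
  fraud: number;
  legit: number;
  fraudPct: number;
} {
  let total = 0;
  let fraud = 0;
  for (const r of rows) {
    total += r.daysPerClaim * r.totalClaims;
    fraud += r.daysPerClaim * r.fraudClaims;
  }
  return { total, fraud, legit: total - fraud, fraudPct: Math.round((fraud / total) * 100) };
}

const split = premiumDaySplit(premiumRewards);
// split is { total: 749, fraud: 693, legit: 56, fraudPct: 93 }
```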

Was the tiered structure the problem?

We briefly switched to a flat 1-day premium reward before shutting referrals down entirely:

| Era | Total | Genuine | Fake | Fraud % |
|---|---|---|---|---|
| Old tiered system | 302 | 275 | 27 | 8.9% |
| New 1-day system | 95 | 88 | 7 | 7.4% |

The fraud rate barely changed (8.9% to 7.4%), but the payoff per fake account did. The problem was never the overall generosity of the reward; it was the tiered escalation. The reward-to-effort ratio had a cliff at tier 2, where 2 minutes of creating a Gmail suddenly bought you 7 days of premium. Under the 1-day system, you'd need a new fake account every day to maintain premium, so the economics of farming collapsed.
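A rough way to see both effects side by side is to compute the fraud rate per era next to the number of fake accounts needed for a month of premium. The 7-days-per-fake-account figure for the tiered era is a simplification taken from the framing above, not a measured value, and the names are hypothetical.

```typescript
interface Era {
  name: string;
  total: number;
  fake: number;
  daysPerFakeAccount: number; // assumption: premium days one fake sign-up buys
}

const eras: Era[] = [
  { name: "Old tiered system", total: 302, fake: 27, daysPerFakeAccount: 7 },
  { name: "New 1-day system", total: 95, fake: 7, daysPerFakeAccount: 1 },
];

// Fraud rate as a percentage, rounded to one decimal place.
function fraudPct(e: Era): number {
  return Math.round((e.fake / e.total) * 1000) / 10;
}

// Fake accounts needed to cover a given number of premium days.
function fakeAccountsFor(daysWanted: number, e: Era): number {
  return Math.ceil(daysWanted / e.daysPerFakeAccount);
}

const summary = eras.map((e) => ({
  name: e.name,
  fraudPct: fraudPct(e),
  fakesFor30Days: fakeAccountsFor(30, e),
}));
// summary[0] is { name: "Old tiered system", fraudPct: 8.9, fakesFor30Days: 5 }
// summary[1] is { name: "New 1-day system", fraudPct: 7.4, fakesFor30Days: 30 }
```

Under these assumptions the share of fake referrals is similar in both eras, while the work needed to stay on premium for a month goes up sixfold.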

The multi-account landscape

With referral abuse handled, we checked how many devices were running multiple accounts across the entire platform:

| Accounts per device | Devices | Total accounts |
|---|---|---|
| 2 | 1,619 | 3,238 |
| 3 | 313 | 939 |
| 4 | 109 | 436 |
| 5+ | 63 | 352 |

2,104 devices were running multiple accounts: just under 5,000 accounts out of ~77K users (about 6.5%). The 5+ cluster (63 devices, 352 accounts) looked suspicious. But when we checked their trial flags and exam usage: all zeros. No trial abuse, no free exam farming. As far as we could tell, the multi-accounts had been built for referral farming, and with referrals dead, the accounts were inert.

We considered implementing ANDROID_ID fingerprinting and device-level account restrictions. Then we checked the data and decided not to build it. The problem was already solved. Ten minutes of SQL saved days of engineering.
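The per-device bucketing in the table above can be reproduced with a simple group-and-count. This sketch assumes rows of (userId, deviceId) pairs; how a device is identified in the real schema is not stated, so `deviceId` is a placeholder.

```typescript
interface DeviceRow {
  userId: string;
  deviceId: string; // placeholder for whatever identifies a device
}

// Returns how many devices fall into each "accounts per device" bucket (2, 3, 4, 5+).
function bucketDevices(rows: DeviceRow[]): Map<string, number> {
  const usersByDevice = new Map<string, Set<string>>();
  for (const row of rows) {
    const users = usersByDevice.get(row.deviceId) ?? new Set<string>();
    users.add(row.userId); // a Set ignores repeated rows for the same user
    usersByDevice.set(row.deviceId, users);
  }

  const buckets = new Map<string, number>();
  usersByDevice.forEach((users) => {
    if (users.size < 2) return; // single-account devices are not of interest
    const label = users.size >= 5 ? "5+" : String(users.size);
    buckets.set(label, (buckets.get(label) ?? 0) + 1);
  });
  return buckets;
}
```

Joining the resulting device clusters against trial and exam usage is what showed the accounts were inert.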

Architecture summary

SIGN-IN ATTEMPT
- Google / Telegram / Widget
  → assertNotBanned()
  → 403 if banned
  → Token refresh also returns 403 (within 1 hour)

ACCOUNT DELETION
- Delete account → snapshot identity + ban flag
- Re-register with same identity
- If match found → restore ban via MAX()

DEVICE REGISTRATION
- Register FCM token
- If device linked to banned user → auto-ban

REFERRAL GRAPH
- Traverse referral chain
- Ban connected accounts (iterative)
- Revoke fraudulently earned premium

What we learned

- Ban the actor, not just the action. Our initial referral_banned flag only blocked referral code usage. That's like banning someone from the loyalty program but still letting them shop. If someone is abusing your system, lock the door.

- Deletion is an escape hatch. If you ban someone but let them delete and re-create, you haven't banned them. Snapshot identities before deletion and check on re-registration.

- Walk the graph. The 114 banned accounts were the pawns. The 34 accounts collecting premium were the real abusers. Always check both sides of the referral relationship.

- Tiered rewards create cliffs. Streak freezes at tier 1 gave nobody a reason to farm. 7-day premium at tier 2 drew almost all of the abuse. The moment you give away the thing people pay for, someone will figure out how to farm it. Keep referral rewards distinct from what your paid tier sells.

- 403 vs 401 matters. Returning 401 for a ban would have sent our Android interceptor into a silent retry loop (treating it as an expired token) instead of showing the user why they were blocked. Small detail, big behavioral difference.

- Check the data before building defenses. We considered implementing ANDROID_ID fingerprinting, device-level exam quotas, and mandatory notification permissions. Then we checked: the 5+ account cluster had zero trial abuse, zero free exam farming. The only exploit was referral farming, which we'd already killed. Sometimes the best engineering decision is not to build something.

- Don't kill a working growth channel because of a small abuse cluster. The fraud rate was only 9%. 91% of referrals were genuine users who retained at 2.4x baseline. 363 real users with 2.4x retention for 56 days of premium is an incredible acquisition deal. The system was working; it just needed guardrails, not demolition.

Connect with Azizbek

- LinkedIn: /in/typos-bro

Built by MilliyTechnology. We're building AI-powered exam prep tools for students in Central Asia. If you're working on similar problems, reach out.
